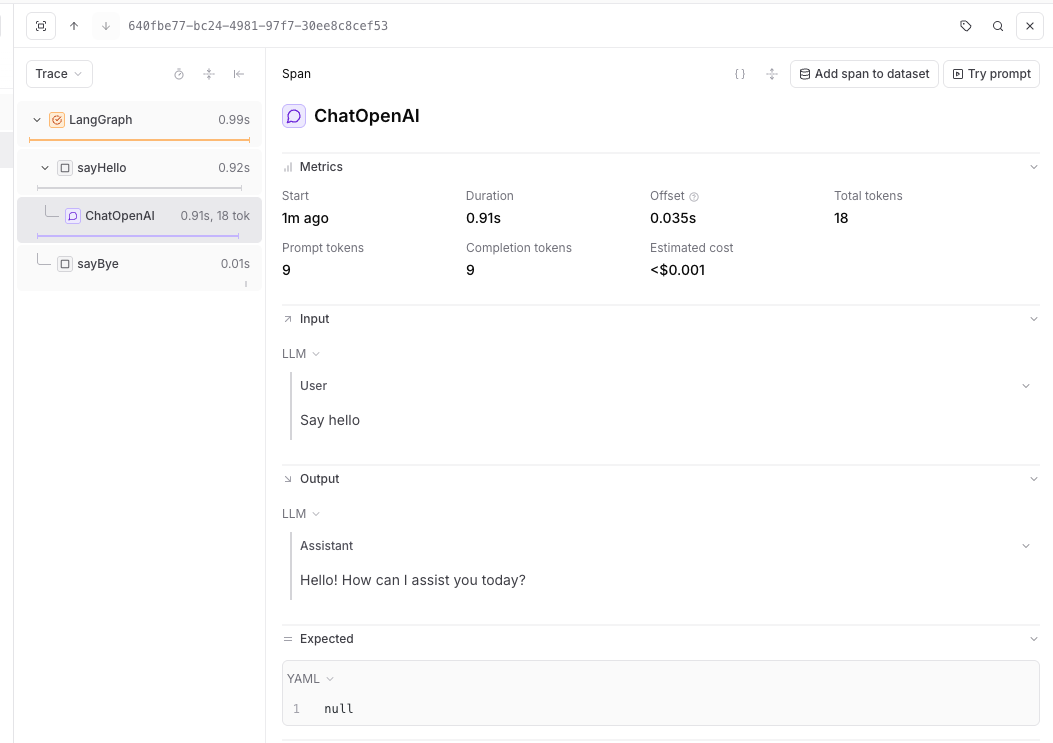

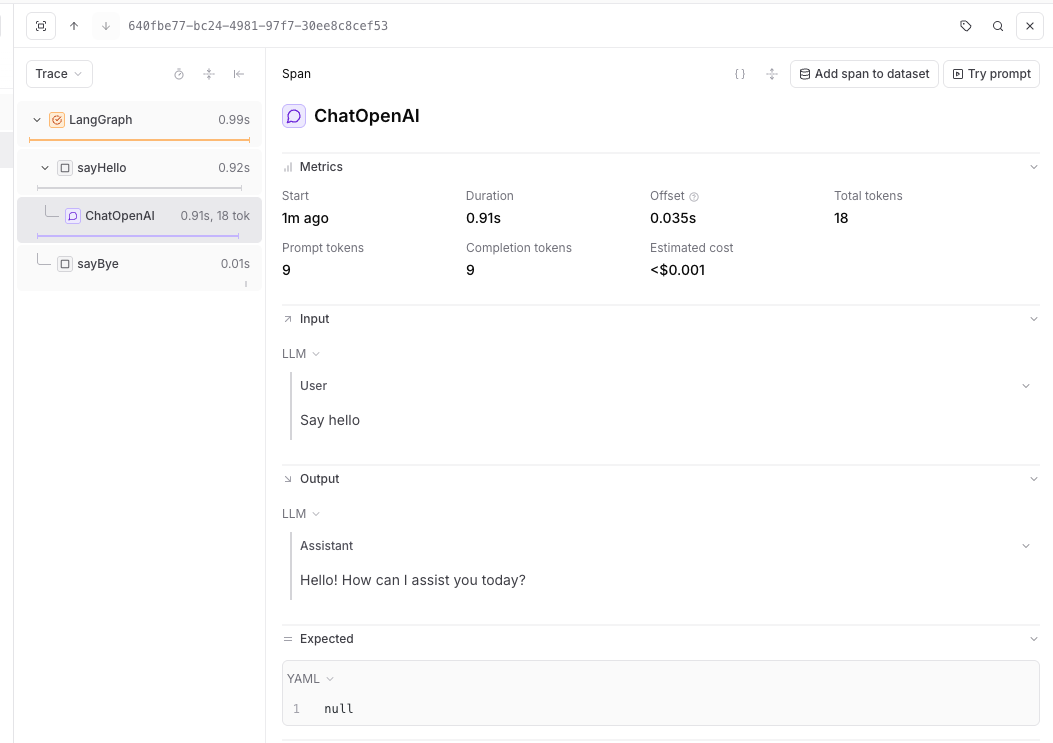

LangGraph is a library for building stateful, multi-actor applications with LLMs. Braintrust traces LangGraph applications through the LangChain callback system, capturing graph execution, node transitions, and model calls.

Setup

Install LangGraph alongside Braintrust and the LangChain packages you use:

# pnpm
pnpm add braintrust @braintrust/langchain-js @langchain/core @langchain/langgraph @langchain/openai

# npm
npm install braintrust @braintrust/langchain-js @langchain/core @langchain/langgraph @langchain/openai

Trace with LangGraph

Calling braintrust.auto_instrument() patches LangChain to register a global callback handler, which LangGraph also uses. See Trace LLM calls for details about auto-instrumentation.

Python auto-instrumentation

from typing import TypedDict

import braintrust

# auto_instrument() and init_logger() run before the LangChain and LangGraph
# imports below.
braintrust.auto_instrument()
braintrust.init_logger(project="My Project")

from langchain_openai import ChatOpenAI
from langgraph.graph import END, START, StateGraph


class GraphState(TypedDict, total=False):
    message: str


def main():
    model = ChatOpenAI(model="gpt-5-mini")

    def say_hello(state: GraphState):
        # Ask the model for a greeting and store it in the graph state.
        response = model.invoke("Say hello")
        return {"message": response.content}

    def say_bye(state: GraphState):
        # Append a farewell to whatever message the previous node produced.
        return {"message": f"{state.get('message', '')} Bye."}

    # Linear graph: START -> sayHello -> sayBye -> END
    workflow = (
        StateGraph(state_schema=GraphState)
        .add_node("sayHello", say_hello)
        .add_node("sayBye", say_bye)
        .add_edge(START, "sayHello")
        .add_edge("sayHello", "sayBye")
        .add_edge("sayBye", END)
    )

    graph = workflow.compile()
    result = graph.invoke({})
    print(result)


if __name__ == "__main__":
    main()

Manual callback setup

If you want explicit control over the LangChain handler, create a BraintrustCallbackHandler yourself and register it with setGlobalHandler. The example below is TypeScript:

import {
  BraintrustCallbackHandler,
  setGlobalHandler,
} from "@braintrust/langchain-js";
import { END, START, StateGraph, type StateGraphArgs } from "@langchain/langgraph";
import { ChatOpenAI } from "@langchain/openai";
import { initLogger } from "braintrust";

const logger = initLogger({
  projectName: "My Project",
  apiKey: process.env.BRAINTRUST_API_KEY,
});

// Create the handler explicitly and register it as the global handler.
const handler = new BraintrustCallbackHandler({ logger });
setGlobalHandler(handler);

type HelloWorldGraphState = Record<string, unknown>;

const graphStateChannels: StateGraphArgs<HelloWorldGraphState>["channels"] = {};

const model = new ChatOpenAI({
  model: "gpt-5-mini",
});

// Node: ask the model for a greeting.
async function sayHello(_state: HelloWorldGraphState) {
  const res = await model.invoke("Say hello");
  return { message: res.content };
}

// Node: log a farewell and leave the state unchanged.
function sayBye(_state: HelloWorldGraphState) {
  console.log("From the 'sayBye' node: Bye world!");
  return {};
}

async function main() {
  // Linear graph: START -> sayHello -> sayBye -> END
  const graphBuilder = new StateGraph({ channels: graphStateChannels })
    .addNode("sayHello", sayHello)
    .addNode("sayBye", sayBye)
    .addEdge(START, "sayHello")
    .addEdge("sayHello", "sayBye")
    .addEdge("sayBye", END);

  const helloWorldGraph = graphBuilder.compile();
  await helloWorldGraph.invoke({});
}

main();
